Shilong Liu Homepage

Hi! This is Shilong Liu, 刘世隆 in Chinese. I’m a ceil(now() - 2020.9)th year Ph.D. candidate at the Department of Computer Science and Technology, Tsinghua University, under the supervision of Prof. Lei Zhang, Prof. Hang Su, and Prof. Jun Zhu. I got my bachelor’s degree from the Department of Industrial Engineering, Tsinghua University in 2020.

I am a computer vision intern at the International Digital Economy Academy (IDEA), under the supervision of Prof. Lei Zhang.

I was a summer intern at Microsoft Research, Redmond from June to September 2023, under the supervision of Dr. Chunyuan Li and Dr. Hao Cheng. I also collaborate closely with Dr. Jianwei Yang.

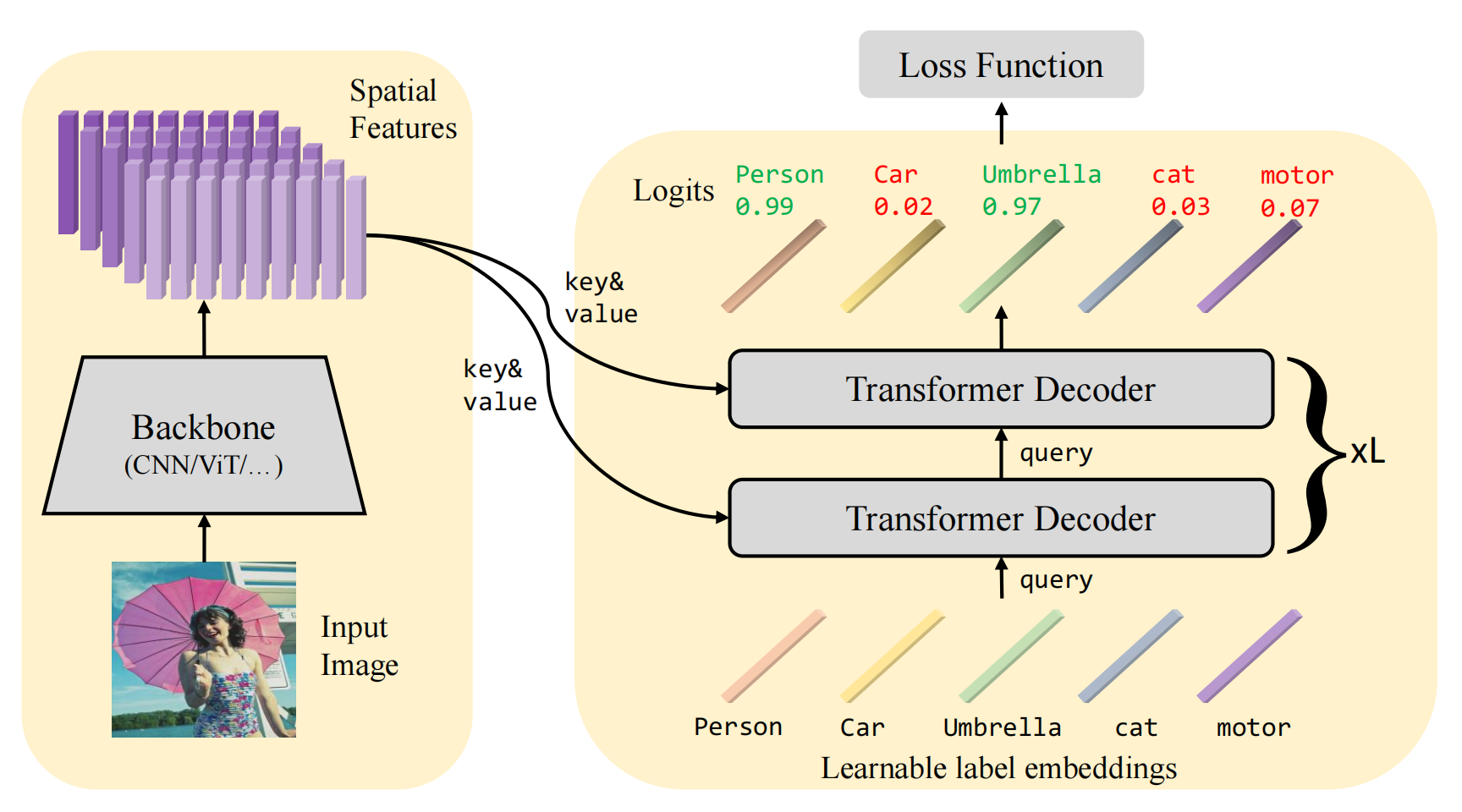

My research interests include computer vision, object detection, and multi-modal learning.

Contact me by email: slongliu86 AT gmail.com or liusl20 AT mails.tsinghua.edu.cn

| Google Scholar | GitHub | Zhihu 知乎 |

News

| Feb 19, 2024 | Invited talk at the Rising Stars in AI Symposium 2024 at KAUST. I really enjoyed the trip. |

|---|---|

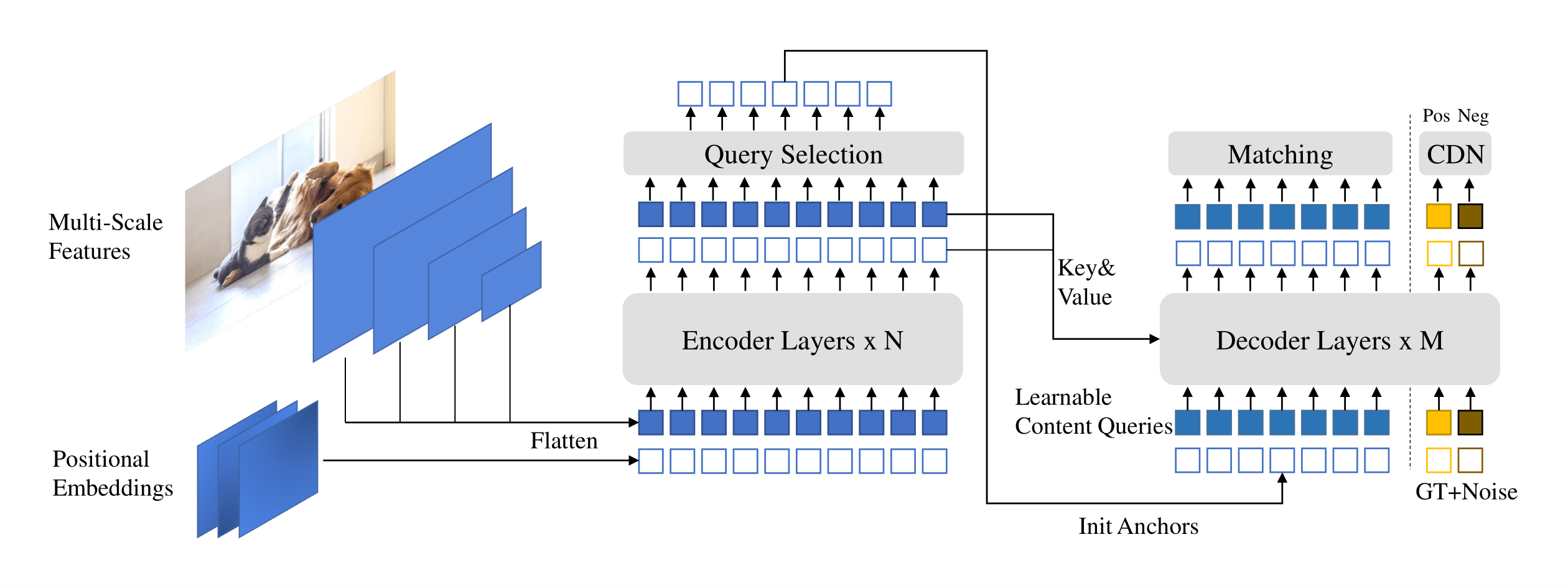

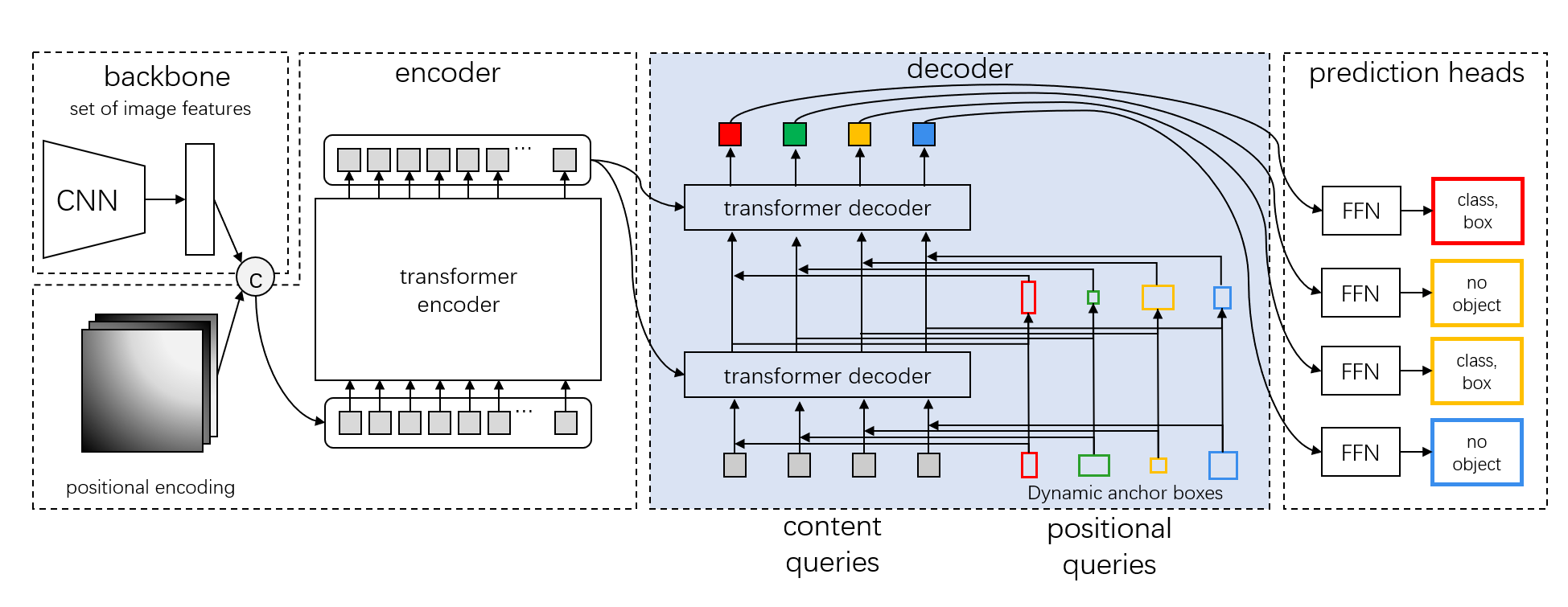

| Dec 1, 2023 | DINO and DAB-DETR were recognized as most influential papers for ICLR 2023 and ICLR 2022, respectively. Mask DINO was selected as one of the most influential papers for CVPR 2023. |

| Nov 5, 2023 | I was named a CCF-CV Rising Star Scholar 2023 (CCF-CV 学术新锐学者, 3 people per year)! Thanks to the China Computer Federation. |

| Sep 29, 2023 | Invited talks at the Institute for AI Industry Research (AIR) at Tsinghua University, the HPC-AI Lab at the National University of Singapore, the Gaoling School of Artificial Intelligence at Renmin University of China (RUC), and the VALSE Student Webinar. |

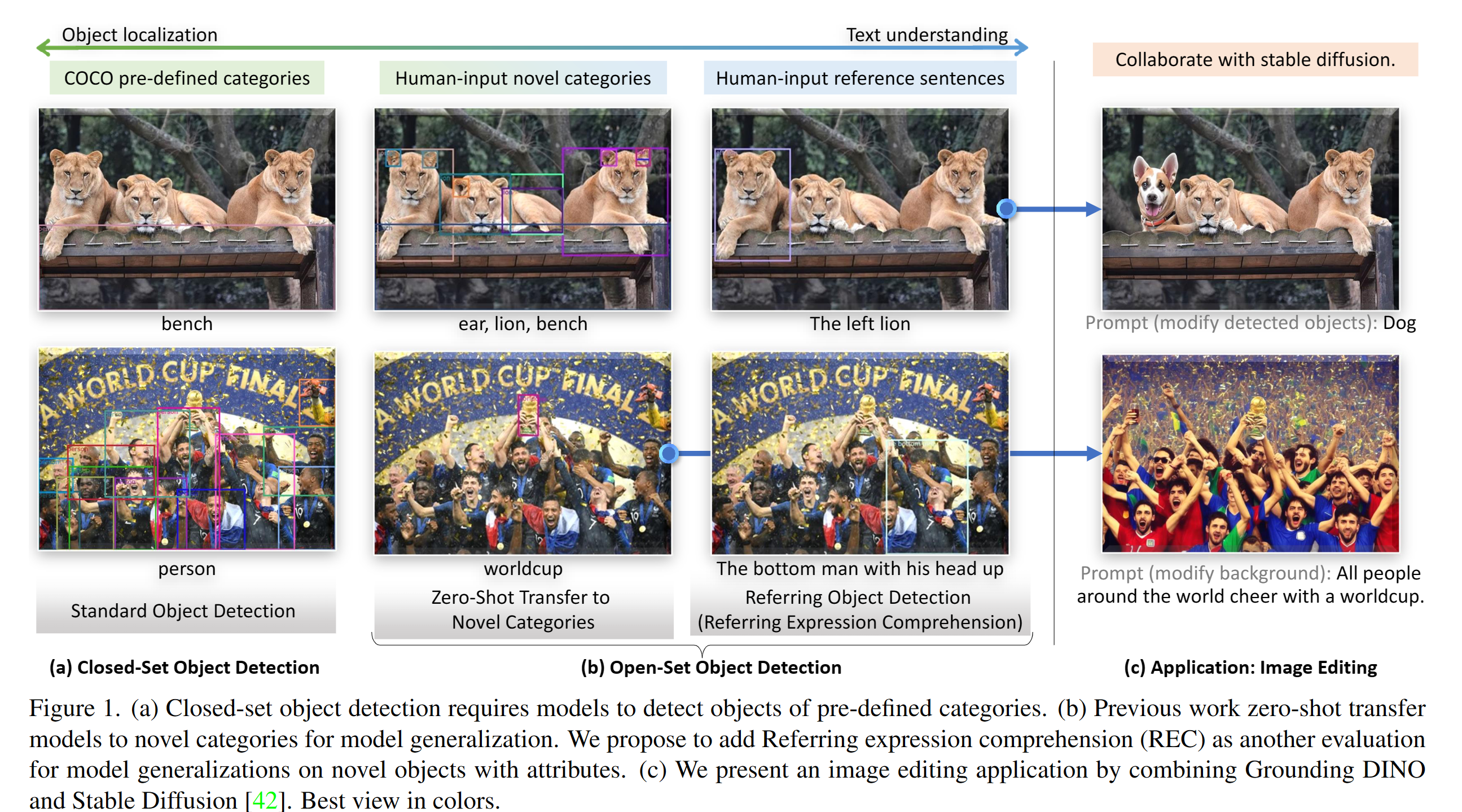

| Mar 13, 2023 | We release Grounding DINO, a strong open-set object detection model that achieves the best results on open-set object detection tasks. It achieves 52.5 zero-shot AP on COCO detection, without any COCO training data! It achieves 63.0 AP on COCO after fine-tuning. Code and checkpoints will be available here. |

| Sep 22, 2022 | We release detrex, a toolbox that provides state-of-the-art Transformer-based detection algorithms. It includes DINO with better performance. Feel free to try it! |